Sony Music Takes Action Against AI-Generated Deepfakes: Over 135,000 Songs Removed

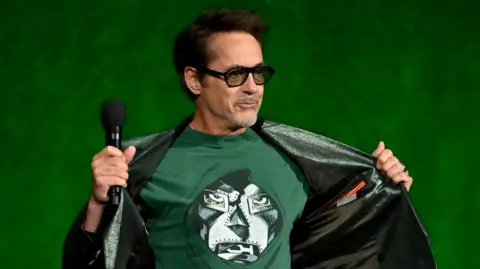

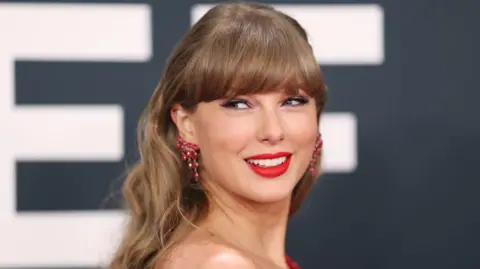

In a significant move to protect its artists, music giant Sony Music has asked streaming platforms to remove more than 135,000 songs that fraudsters created with deepfake technology. The counterfeit tracks imitate the voices and likenesses of prominent artists such as Beyoncé, Queen, and Harry Styles.

These so-called deepfakes do more than cause direct commercial harm to legitimate recording artists. They also threaten artists' reputations, particularly when those artists are promoting new work. Dennis Kooker, president of Sony's global digital business, warned that deepfakes could undermine the success of genuine release campaigns.

The number of fraudulent tracks has been rising as artificial intelligence tools become cheaper and more accessible. Sony estimates that the 135,000 deepfake songs discovered so far represent only a fraction of those uploaded across streaming services. Around 60,000 of them were identified in the past year alone, affecting artists including Bad Bunny, Miley Cyrus, and Mark Ronson.

Kooker stressed that deepfakes are especially damaging while artists are actively promoting their music, because they exploit the demand that authentic releases generate. Such counterfeits risk siphoning off both the attention and the revenue that artists' legitimate work has earned.

The discussion of deepfakes coincided with the London launch of the Global Music Report from the International Federation of the Phonographic Industry (IFPI), which reported that recorded music revenues rose 6.4% last year to $31.7 billion. The growth continues a recovery driven by streaming subscriptions, which revitalized an industry previously plagued by piracy and financial struggle.

As AI technology rapidly evolves, the IFPI has raised serious concerns about streaming fraud, a practice in which "fake" artists upload songs to platforms such as Spotify and YouTube and artificially inflate their play counts for financial gain. The rise of AI-generated music is thought to have made this problem worse.

Industry leaders, including Kooker, are calling on streaming platforms to be transparent about which content is AI-generated, so that fans can tell genuine artistry apart from unauthorized AI imitations. They see this transparency as essential to protecting the integrity of the music ecosystem.